Browsing with a mobile screen reader

Posted by Henny Swan in User experience

In the second post in our browsing with assistive technology series, we discuss mobile screen readers.

You can also explore browsing with desktop screen readers, browsing with a keyboard, browsing with screen magnification and browsing with speech recognition.

A screen reader is a software application that announces what is on screen to people who cannot see, or who cannot understand, what is displayed visually. Screen readers provide access to the entire operating system and its applications, including browsers and web content. They are available on all popular platforms including Windows, macOS, iOS and Android.

Who uses a mobile screen reader

People who are blind or partially sighted use a screen reader, as do people with cognitive or learning disabilities.

People who are blind and read Braille may use a screen reader in combination with a refreshable Braille display. A refreshable Braille display is an external piece of hardware made up of rows of pins that can be formed into the shape of a Braille character. People can use Braille in combination with speech output or without it. Braille displays are a useful tool if people are in meetings or in a noisy room where listening to the speech output is not convenient.

Most touch devices support screen magnification in combination with screen readers. This enables people with partial sight, or with cognitive or learning disabilities, to use a combination of speech and sight. For example, on iOS, Zoom can be used to enlarge everything on screen, or the magnifier can be used to enlarge parts of the screen, while VoiceOver reads content.

Popular mobile screen readers

There are screen readers available on all popular mobile platforms:

- Android TalkBack, Samsung Voice Assistant and Amazon VoiceView

- iOS VoiceOver

According to the WebAIM screen reader user survey in 2021, the most popular mobile platform, and therefore screen reader, is iOS with VoiceOver at 72% usage, followed by Android at 25.8%, with the rest at 2.2%.

The following mobile browser combinations are the most commonly used on iOS and Android respectively:

- VoiceOver with Safari

- TalkBack with Chrome

What mobile screen readers announce

Like desktop screen readers, mobile screen readers announce everything on a web page and within an application.

All static text is spoken including paragraphs of text, headings and lists. Screen readers also announce hidden text such as text descriptions for images, visually hidden text, and the names of landmark regions (for example banner, main and footer) when browsing web content.

Mobile screen readers also identify the role of an element, for example link, button, image, slider or toggle button.

On iOS there is an additional feature where a "hint" can be announced with an element. This acts as an audible tooltip by describing the action an element completes. For example, "View, button, displays book detail", where "displays book detail" is the hint. Hints can be switched on or off, so they should never be relied on for primary content.
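As a rough illustration of why hints should not carry primary content, here is a minimal TypeScript sketch of how an announcement might be assembled from a name, a role and an optional hint. The `AccessibleElement`, `SpeechSettings` and `composeAnnouncement` names are hypothetical and exist only for this example; this is a simplified model, not how VoiceOver or TalkBack are implemented.

```typescript
// Simplified model of a spoken announcement. All names are hypothetical;
// this is not the VoiceOver or TalkBack API.
interface AccessibleElement {
  name: string;  // accessible name, such as visible text or an image's text description
  role?: string; // for example "link", "button", "image"
  hint?: string; // optional iOS-style hint describing what activating the element does
}

interface SpeechSettings {
  hintsEnabled: boolean; // people can switch hints on or off
}

function composeAnnouncement(el: AccessibleElement, settings: SpeechSettings): string {
  const parts: string[] = [el.name];
  if (el.role !== undefined) {
    parts.push(el.role);
  }
  // The hint is only spoken when the setting is on.
  if (settings.hintsEnabled && el.hint !== undefined) {
    parts.push(el.hint);
  }
  return parts.join(", ");
}

const viewButton: AccessibleElement = {
  name: "View",
  role: "button",
  hint: "displays book detail",
};

const withHints = composeAnnouncement(viewButton, { hintsEnabled: true });
// withHints is "View, button, displays book detail"

const withoutHints = composeAnnouncement(viewButton, { hintsEnabled: false });
// withoutHints is "View, button"
```

With hints switched off, only the name and role remain, so anything a person needs in order to understand the element has to live there.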

Navigating with mobile screen readers

When a mobile screen reader is enabled on a touch-screen device all the gestures change. This is because the mode of interaction changes when a screen reader is enabled. Instead of visually scanning the screen and tapping once on something to activate it, it is necessary to scan the screen by touch and then double tap to activate it.

Transcript

[The TetraLogical logo whooshes into view on a white background. The logo flashes and stops with a sonar-like 'ping'. It then magnifies and fades out.]

[A dark purple background appears with the TetraLogical logo faintly overlaid]

Browsing with a mobile screen reader.

Like desktop screen readers, mobile screen readers announce everything on a web page and within an application.

All static text is spoken including paragraphs of text, headings and lists.

Screen readers also announce additional information, such as text descriptions for images, visually hidden text, and the names of landmark regions (for example banner, main, and footer) when browsing web content.

[An image of the TetraLogical homepage appears on a mobile device being held in hand. The logo is displayed at the top, then below it a horizontal list of links for main navigation. The main heading is below that, and the body of the page content fills the rest of the screen]

When a mobile screen reader is enabled on a touch-screen device, all the gestures change.

Instead of visually scanning the screen and tapping once on something to activate it, people will scan the screen by touch, and move their screen reader focus sequentially, in a linear fashion, through elements on the screen.

[The hands holding the device navigate through the elements on screen]

Swipe right to move focus to the next item. Swipe left to move focus to the previous item.

In addition, mobile screen readers also let you "explore by touch", dragging a finger over the screen.

[As the user drags their finger, a green visible focus outline highlights content as it moves. Text is displayed at the bottom of the screen as the screen reader reads content aloud]

The screen reader will focus and announce each item you touch.

This can be useful in situations where an item can't be reached through normal navigation, but can be quite inefficient.

[The user moves the visible focus to the "News" page. They double-tap elsewhere on the screen and the News page opens]

Once a mobile screen reader is focused on an item, double-tapping anywhere on the screen will activate it.

[The TetraLogical homepage on a mobile screen is displayed on a purple background]

On both Android and iOS it is possible to navigate by a specific type of element.

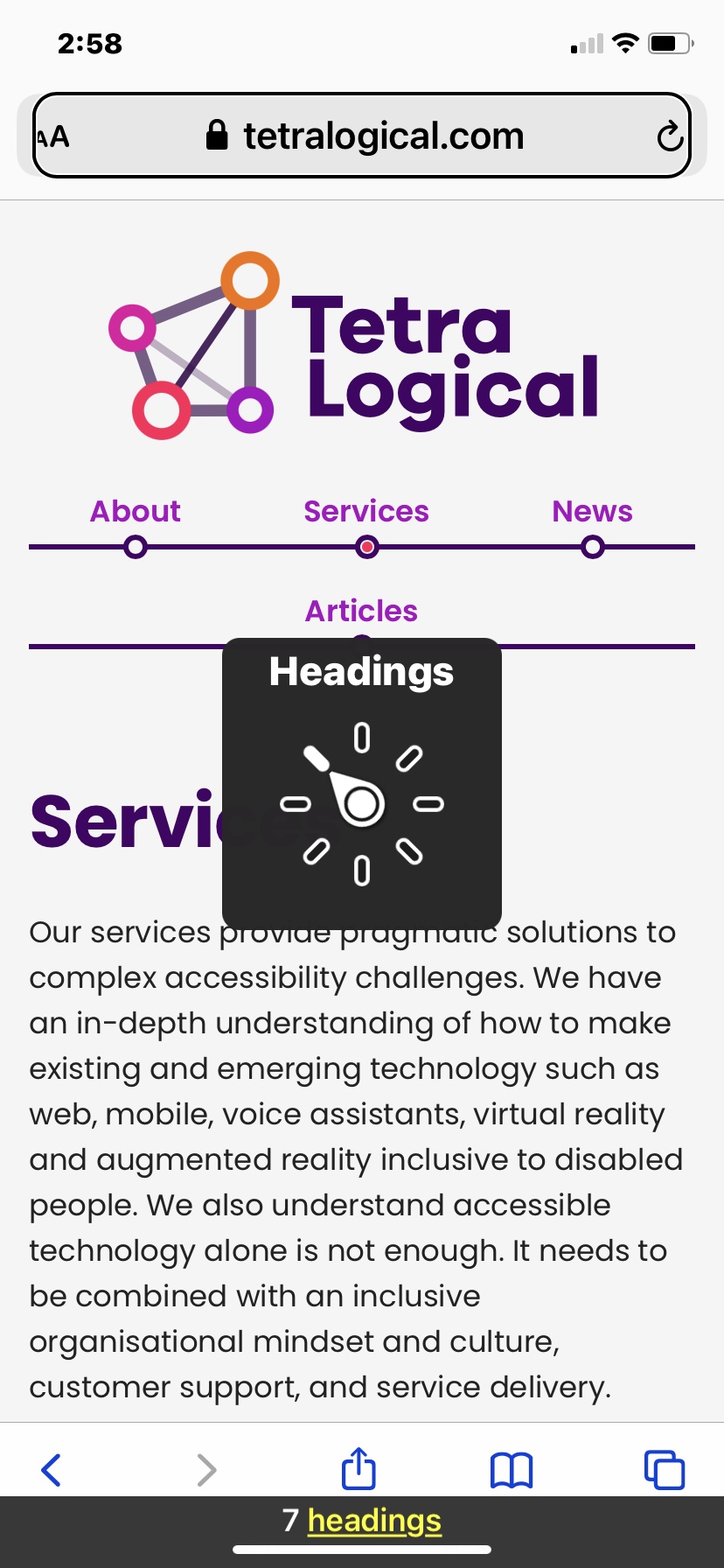

On iOS, the VoiceOver rotor makes it possible to access different configuration settings and to navigate applications and web pages by different elements.

Moving two fingers clockwise opens the rotor where you can choose what types of element you want to navigate between.

[The VoiceOver rotor appears on screen with 8 options displayed in a dial. As the user navigates and each new item is announced, the dial moves clockwise through the options]

[VoiceOver] Characters, Words, Lines, Speaking rate, 60%, Containers, Headings, 5 headings

[The rotor disappears]

Once a type of element is selected, swiping up or down moves focus between the elements of that particular type.

For example, while browsing a website, if headings are selected in the rotor, then swiping up or down moves focus from heading to heading.

[The visible focus moves from heading to heading as the user navigates down the page and each heading is read aloud]

[VoiceOver] Hello, we're TetraLogical, Heading level 1, News, heading level 2, Blog, heading level 2, Contact us, heading level 2.

[The TetraLogical homepage on a mobile screen reappears. The visible focus is now an outline over the entire screen]

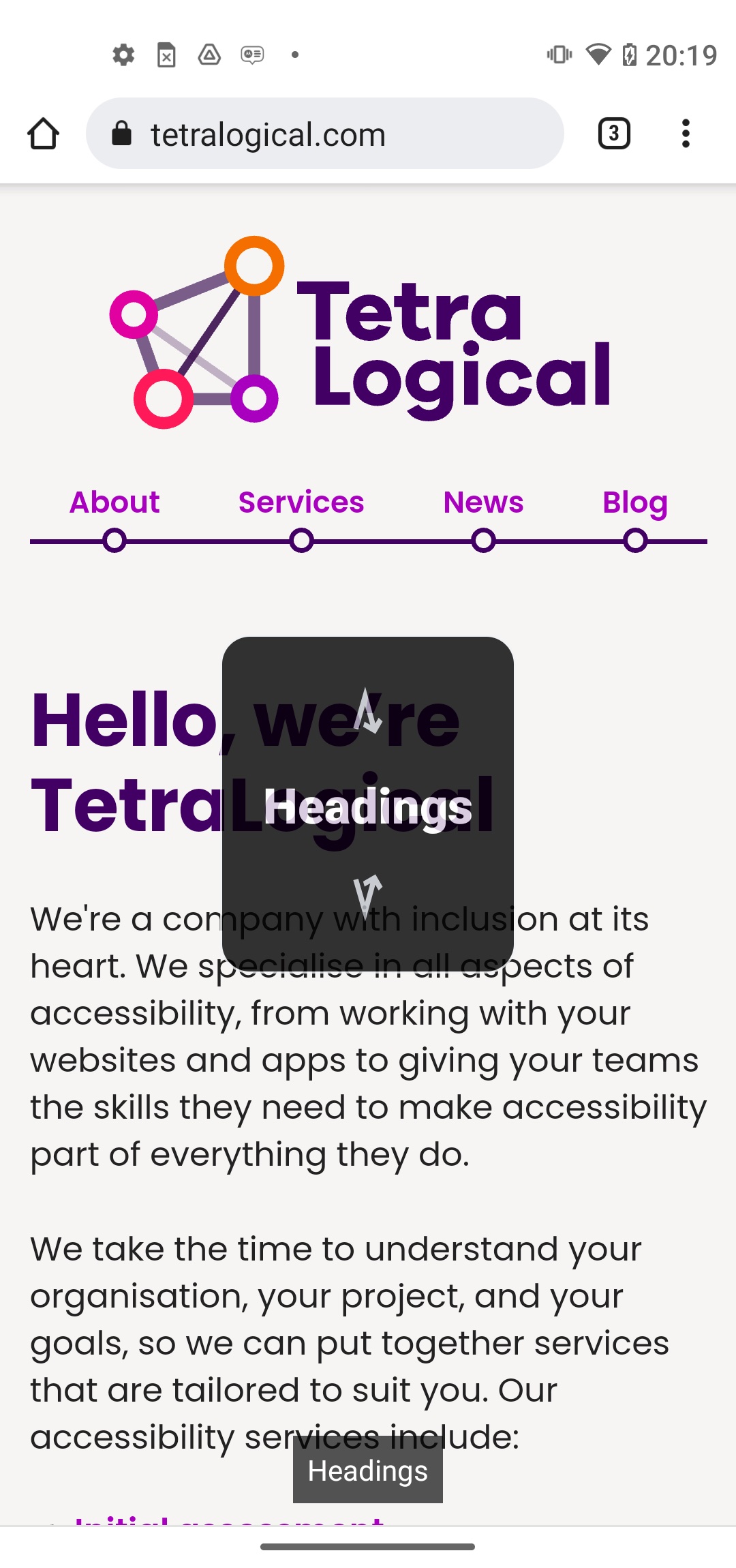

On Android you can swipe down then up in one gesture to cycle between TalkBack navigation options.

[V-shaped arrows pointing up and down appear on screen. They are displayed above and below each word being announced]

[TalkBack] Characters, Words, Lines, Headings, Swipe up or swipe down to read by headings

[The visible focus outline stops on the main heading]

Once an option is selected, such as headings, a single swipe, either up or down, moves focus between each heading.

[The visible focus outline moves down the page as the user navigates]

[TalkBack] Hello, we're TetraLogical, Heading 1, News, heading 2, Blog, heading 2.

All screen readers can be customised to suit people's preferences. Probably the most common setting people change is the rate of speech output.

[The VoiceOver rotor reappears with "Speaking Rate" selected in the dial]

Many people who use screen readers on a daily basis listen to the speech output very fast.

This is similar to how someone who is sighted might skim read or read fast in their head.

The speech rate can be so fast that output is almost impossible to follow for people unaccustomed to screen readers.

As an example, here we change the speaking rate in iOS VoiceOver from 60% to 100%, and then read through content on the TetraLogical website's homepage.

[The "Speaking Rate" overlay disappears]

[VoiceOver] Speaking Rate, 60%, 65%, 70%, 75%, 80%, 85%, 90%, 95%, 100%

[VoiceOver, speaking at a very high rate] We take the time to understand your organisation, your project, and your goals, so we can put together services that are tailored to suit you. Our accessibility services include...

These are some of the high level details about mobile screen readers, and common strategies that people browsing with a mobile screen reader use.

[The screen fades to white and the TetraLogical logo appears again]

To find out more about accessibility visit tetralogical.com.

Standard navigation

The most common approach to navigating with a mobile screen reader is to swipe through content sequentially.

- Swipe right to move focus to the next item

- Swipe left to move focus to the previous item

In addition, mobile screen readers also let you "explore by touch", dragging a finger over the screen. The screen reader will focus and announce each item you touch. This can be useful in situations where an item can't be reached through normal navigation, but can be quite inefficient.

Once a mobile screen reader is focused on an item, double-tapping anywhere on the screen will activate it.
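The interaction described above can be sketched as a small model in TypeScript. The `LinearNavigator` class and its method names are hypothetical, and the behaviour at the first and last item (focus simply stays put) is an assumption made for the sketch rather than a description of any particular screen reader.

```typescript
// Simplified model of sequential navigation. All names are hypothetical.
interface FocusableItem {
  label: string;        // what the screen reader would announce
  activate: () => void; // what happens when the item is activated
}

class LinearNavigator {
  private items: FocusableItem[];
  private index = 0;

  constructor(items: FocusableItem[]) {
    this.items = items;
  }

  private current(): FocusableItem {
    const item = this.items[this.index];
    if (item === undefined) {
      throw new Error("There is nothing to focus");
    }
    return item;
  }

  // Swipe right: move focus to the next item (assumed to stop at the last one).
  swipeRight(): string {
    this.index = Math.min(this.index + 1, this.items.length - 1);
    return this.current().label;
  }

  // Swipe left: move focus to the previous item (assumed to stop at the first one).
  swipeLeft(): string {
    this.index = Math.max(this.index - 1, 0);
    return this.current().label;
  }

  // Explore by touch: focus jumps straight to the item under the finger.
  touch(itemIndex: number): string {
    if (itemIndex >= 0 && itemIndex < this.items.length) {
      this.index = itemIndex;
    }
    return this.current().label;
  }

  // Double tap: activates the focused item, wherever on screen the tap lands.
  doubleTap(): void {
    this.current().activate();
  }
}

const opened: string[] = [];
const nav = new LinearNavigator([
  { label: "Home, link", activate: () => { opened.push("Home"); } },
  { label: "News, link", activate: () => { opened.push("News"); } },
  { label: "Blog, link", activate: () => { opened.push("Blog"); } },
]);

nav.swipeRight(); // focus moves from "Home, link" to "News, link"
nav.doubleTap();  // activates News; the tap position is never consulted
```

Note that `doubleTap` takes no coordinates: the target is whatever currently has screen reader focus, which is why focus order and accessible names matter more than where an element sits on screen.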

Navigating specific elements

On both Android and iOS it is possible to select the type of element to navigate by.

The VoiceOver rotor makes it possible to access different configuration settings and to navigate applications and web pages by different elements. Moving two fingers clockwise opens the rotor where you can choose what types of element you want to navigate between:

- In applications this includes headings, words, characters, containers and lines

- When browsing this includes headings, words, characters, containers, lines, links, and form controls

Once a type of element is selected, swiping up or down moves focus between elements of that type. For example, if headings are selected in the rotor, then swiping moves focus from heading to heading. For more information visit VoiceOver gestures on iPhone (note these also apply to iPads).

On Android you can swipe down then up in one gesture to cycle between TalkBack navigation options. Options include characters, words, lines, paragraphs, headings, controls and links. Once an option is selected, such as headings, a single swipe either up or down moves focus between each heading. For more information visit navigating your device with TalkBack.

Android TalkBack gestures can vary between versions, and between different devices, so it is always worth following the TalkBack tutorial available in accessibility options in settings. iOS VoiceOver also has a built-in tutorial in settings.
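Navigating by element type can be thought of as skipping through the page to the next or previous element that matches the selected type. The TypeScript sketch below models that idea only. The `PageElement` type, the `moveByType` function and the sample page are hypothetical, the heading text is borrowed from the transcript above, and staying put when no match is found is an assumption for the sketch.

```typescript
// Simplified model of navigating by element type. All names are hypothetical.
type ElementType = "heading" | "link" | "formControl" | "text";

interface PageElement {
  type: ElementType;
  text: string;
}

// Returns the index of the next (direction 1) or previous (direction -1)
// element of the selected type. If there is none in that direction,
// focus is assumed to stay where it is.
function moveByType(
  elements: PageElement[],
  current: number,
  selected: ElementType,
  direction: 1 | -1
): number {
  for (let i = current + direction; i >= 0 && i < elements.length; i += direction) {
    const el = elements[i];
    if (el !== undefined && el.type === selected) {
      return i;
    }
  }
  return current;
}

// A made-up page: headings with placeholder content between them.
const page: PageElement[] = [
  { type: "heading", text: "Hello, we're TetraLogical" },
  { type: "text", text: "Placeholder paragraph" },
  { type: "link", text: "Placeholder link" },
  { type: "heading", text: "News" },
  { type: "text", text: "Placeholder paragraph" },
  { type: "heading", text: "Blog" },
];

let focus = 0;
focus = moveByType(page, focus, "heading", 1); // index 3, the "News" heading
focus = moveByType(page, focus, "heading", 1); // index 5, the "Blog" heading
```

The model also shows why correct markup matters: an element that looks like a heading but is not exposed as one would be skipped entirely when navigating by headings.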

Speech output rate

All screen readers can be customised to suit people's preferences. Probably the most common setting people change is the rate of speech output.

Many people who use screen readers on a daily basis listen to the speech output very fast. This is similar to how someone who is sighted might skim read or read fast in their head. The speech rate can be so fast that output is almost impossible to follow for people unaccustomed to screen readers. This is why it is important to prioritise keywords first in links, headings and sentences.

The difference between browsing the web and browsing applications

According to the WebAIM screen reader user survey in 2021, people who use mobile screen readers are slightly more likely to use a mobile app than a web site to carry out tasks.

It's hard to know why, but it may be because browsing the web is slightly more complicated than browsing apps. For example, more information is announced on the web, which can be both a good and a bad thing.

On a positive note, you get more detailed information about elements. A heading level one <h1> on the web will be announced as "heading level one". In applications only "heading" is announced, not the level, as there is no means to include levels.

Apps, however, are simpler. Standard UI components that come with app UI libraries are natively accessible, making the user experience much smoother.

Summary

People use mobile screen readers because they cannot see the screen or have a cognitive or learning disability, which means listening to content is helpful.

People who use mobile screen readers have many gestures at their disposal. These gestures are used in various combinations depending on what the person wants to do - read a section of content, scan a page for a section of interest, find out how a word is spelt, activate a link or button, and more besides.

Some people who use a mobile screen reader prefer to use apps over web content, possibly because apps are simpler to use and easier to make accessible.

Next steps

Read an inclusive approach to video production to learn more about how to produce accessible video and how our Consultancy service can help you achieve sustainable accessibility.

Updated Thursday 2 March 2023.
